Analysis of algorithm complexity is the process of evaluating the computational resources, such as time and space, that an algorithm requires to solve a problem for a given input size. It involves estimating the time and memory the algorithm needs as a function of the size of the input data.

1. Computational Complexity:

Computational complexity is the amount of computational resources, such as time and space, that an algorithm requires to solve a problem, as a function of the size of the input data. The computational complexity of an algorithm is usually expressed as a function of the input size n, denoted T(n) for time complexity and S(n) for space complexity.
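To make T(n) concrete, here is a minimal sketch that counts loop iterations as a stand-in for running time. The function names `count_linear_steps` and `count_pair_steps` are hypothetical, chosen only for this illustration, and counting iterations is a simplifying assumption about what one "step" costs.

```python
def count_linear_steps(values):
    """Sum a list while counting loop iterations; the count grows like n."""
    steps = 0
    total = 0
    for v in values:
        total += v
        steps += 1
    return total, steps


def count_pair_steps(values):
    """Visit every ordered pair while counting iterations; the count grows like n^2."""
    steps = 0
    for _a in values:
        for _b in values:
            steps += 1
    return steps


if __name__ == "__main__":
    for n in (10, 100, 1000):
        data = list(range(n))
        _, linear = count_linear_steps(data)
        print(n, linear, count_pair_steps(data))
```

Both functions keep only a few counters regardless of n, so their extra space S(n) stays constant, while their step counts grow linearly and quadratically respectively.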

2. Order Notation:

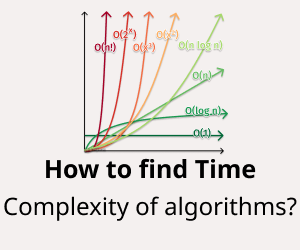

Order notation, also known as big-O notation, is a mathematical notation used to describe the asymptotic behavior of a function as the input size approaches infinity. Big-O notation expresses an upper bound on a function’s growth rate, which is useful for analyzing the worst-case time or space complexity of an algorithm.
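For reference, the usual formal statement of this upper bound can be written as follows. This is the standard textbook definition rather than anything specific to this text; the symbols c and n_0 are the conventional names for the constant factor and the threshold, and f and g are assumed to be non-negative functions of n.

```latex
f(n) = O\bigl(g(n)\bigr)
\iff
\exists\, c > 0,\ \exists\, n_0 \ge 0 \ \text{such that}\
0 \le f(n) \le c \cdot g(n) \quad \text{for all } n \ge n_0 .
```

As a worked instance, take f(n) = 3n^2 + 5n + 2. Since 5n + 2 ≤ n^2 whenever n ≥ 6, we get 3n^2 + 5n + 2 ≤ 4n^2 for all n ≥ 6, so f(n) = O(n^2) with c = 4 and n_0 = 6.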

3. Rules for using the Big-O Notation:

There are several rules for using the big-O notation when analyzing the time or space complexity of an algorithm. These include:

- The big-O notation ignores lower-order terms and constant factors, focusing only on the dominant term that determines the function’s growth rate.

- The big-O notation gives an upper bound and is most commonly applied to the worst-case behavior of the algorithm, so the result bounds the algorithm’s time or space complexity for every input of a given size.

- The big-O notation can be used to compare the time or space complexity of two algorithms and determine which scales better as the input size grows.
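The first rule can be checked numerically. The sketch below uses a made-up cost function, 3n^2 + 5n + 2 (the name `exact_cost` is hypothetical), and divides it by its dominant term n^2. The lower-order terms matter less and less as n grows, which is why they are dropped.

```python
def exact_cost(n):
    """Hypothetical step count chosen for illustration: 3n^2 + 5n + 2."""
    return 3 * n * n + 5 * n + 2


def ratio_to_dominant_term(n):
    """Ratio of the full cost to n^2; it tends to the constant factor 3."""
    return exact_cost(n) / (n * n)


if __name__ == "__main__":
    for n in (10, 100, 1000, 10000):
        print(n, round(ratio_to_dominant_term(n), 4))
```

The ratio settles near 3, a constant factor that big-O also ignores, leaving O(n^2) as the growth rate.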

4. Worst and Average Case Behavior:

The worst-case behavior of an algorithm is the maximum amount of time or space the algorithm requires to solve a problem for a given input size. The worst case is usually the focus of complexity analysis because it provides an upper bound on the algorithm’s performance. The average-case behavior of an algorithm is the expected amount of time or space the algorithm requires, taken over all possible inputs of a given size. The average case is useful for analyzing the performance of an algorithm in realistic situations where the input data won’t always be in the worst-case scenario.
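Linear search is a common way to see the difference between the two cases. The sketch below counts comparisons and assumes, purely for illustration, that the target is always present and equally likely to be at any position; the function name `linear_search_comparisons` is hypothetical.

```python
def linear_search_comparisons(items, target):
    """Return how many comparisons a left-to-right scan makes before stopping."""
    comparisons = 0
    for item in items:
        comparisons += 1
        if item == target:
            break
    return comparisons


n = 100
items = list(range(n))

# Worst case: the target sits in the last position, so all n items are compared.
worst = max(linear_search_comparisons(items, t) for t in items)

# Average case under the uniform assumption: mean over every possible position.
average = sum(linear_search_comparisons(items, t) for t in items) / n

print(worst, average)  # prints "100 50.5"
```

Under these assumptions the worst case costs n comparisons and the average costs (n + 1) / 2, so both grow linearly even though the average is about half the worst case.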